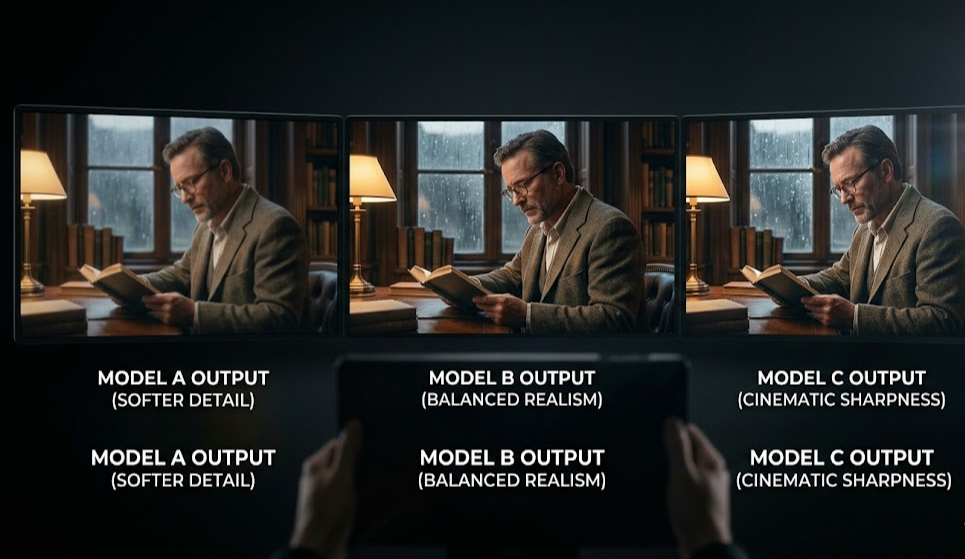

## Kling 3.0 vs Seedance 2.0 vs Sora 2: Which AI Video API Should Developers Use in 2026?

The AI video generation market just hit a critical inflection point. **Kling 3.0** (Kuaishou) launched its API on February 5th. **Seedance 2.0** (ByteDance) goes live on February 24th. **Sora 2** (OpenAI) has been reshaping expectations for physics-accurate video.

For developers building with AI video APIs, the question isn't "is this possible?" but "which model should I actually integrate?" Each of these models takes a fundamentally different approach, and choosing the wrong one for your use case costs time, compute, and quality.

This guide breaks down the real differences: specs, API access, Python integration, pricing, and a clear decision framework for 2026.

## Table of Contents

1. Quick Comparison Table
2. Kling 3.0 API: Motion Mastery
3. Seedance 2.0 API: The Multimodal Director
4. Sora 2 API: Physics & Realism
5. Python API Integration Examples
6. Decision Framework: Which to Choose?
7. Access All Three via One API

## Quick Comparison Table

Here's how the three leading AI video APIs stack up on the specs that matter most to developers:

| Feature | Kling 3.0 | Seedance 2.0 | Sora 2 |
|---|---|---|---|
| Developer | Kuaishou | ByteDance | OpenAI |
| API Status | ✅ Live (Feb 5) | 🔜 Feb 24, 2026 | ⚠️ Limited access |
| Max Duration | 10 seconds | 15 seconds | 12 seconds |
| Max Resolution | 4K / 60fps | 2K / 24fps | 1080p / 24fps |
| Text-to-Video | ✅ | ✅ | ✅ |
| Image-to-Video | ✅ (1-2 images) | ✅ (up to 9 images) | ✅ (1-2 images) |
| Video-to-Video | ❌ | ✅ (up to 3 videos) | ❌ |
| Native Audio | ✅ | ✅ (8 languages) | ✅ |
| Character Consistency | ⭐⭐⭐⭐⭐ (best) | ⭐⭐⭐⭐ | ⭐⭐⭐ |
| Physics Accuracy | ⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ (best) |
| Key Strength | Motion quality + 4K | Multimodal control | World simulation |
| Best For | Character videos, ads | Music videos, creative workflows | Product demos, simulations |

Key takeaway: Sora 2's API access remains limited in 2026 — if you need reliable programmatic access at scale, Kling 3.0 (live now) and Seedance 2.0 (Feb 24) are the two serious developer options.
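If you have hard requirements, it can help to keep the comparison table as data and filter it in code before calling any model. The sketch below is a minimal illustration: the values in `SPECS` are copied from the table above, while the field names, the `RESOLUTION_RANK` ordering, and the `models_meeting` helper are hypothetical and not part of any provider's API.

```python
# Coarse ordering of the resolution labels used in the comparison table.
RESOLUTION_RANK = {"1080p": 1, "2K": 2, "4K": 3}

# Values copied from the comparison table; field names are illustrative.
SPECS = {
    "Kling 3.0": {"max_duration_s": 10, "max_resolution": "4K", "video_to_video": False},
    "Seedance 2.0": {"max_duration_s": 15, "max_resolution": "2K", "video_to_video": True},
    "Sora 2": {"max_duration_s": 12, "max_resolution": "1080p", "video_to_video": False},
}


def models_meeting(min_duration_s=0, min_resolution="1080p", need_video_to_video=False):
    """Return the model names whose table specs satisfy every hard requirement."""
    matches = []
    for name, spec in SPECS.items():
        if spec["max_duration_s"] < min_duration_s:
            continue  # clip length cap is too short
        if RESOLUTION_RANK[spec["max_resolution"]] < RESOLUTION_RANK[min_resolution]:
            continue  # resolution ceiling is too low
        if need_video_to_video and not spec["video_to_video"]:
            continue  # model cannot take video as input
        matches.append(name)
    return matches


print(models_meeting(min_duration_s=12))
print(models_meeting(min_resolution="4K"))
```

A filter like this only narrows the field on specs; API availability and access limits still need to be checked separately.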

## Kling 3.0 API: Motion Mastery at 4K/60fps

Kuaishou's Kling 3.0 arrived on February 5th, 2026, and immediately set a new bar for motion quality and resolution. It's the first commercially available AI video API to offer genuine 4K output at 60 frames per second.

### What Makes Kling 3.0 Stand Out

- **4K/60fps output:** The only model in this comparison delivering true high-resolution, high-frame-rate video, which is critical for professional ad production and broadcast use cases.
- **Character consistency:** Kling 3.0's character tracking is best-in-class. Generate a person in Frame 1, and they'll look identical in Frame 150. No drifting faces, no warped hands.
- **Omni avatar feature:** Upload a single reference image and generate a speaking, gesturing character video, directly competing with HeyGen and Synthesia.
- **Motion smoothness:** Even at complex scene transitions, Kling 3.0 produces noticeably smoother motion curves than previous-generation models.

### Kling 3.0 API Limitations

- Max 10 seconds per clip (shorter than Seedance's 15s)
- No native video-to-video input (static image or text only)
- Audio generation exists, but multilingual lip-sync requires post-processing

### Best Use Cases for Kling 3.0 API

- Avatar/spokesperson videos for marketing
- High-resolution product advertisement generation
- Social media content requiring consistent characters
- Any workflow where 4K output quality is non-negotiable
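Because of the 10-second cap per clip, anything longer has to be planned as several generations and joined afterwards. The helper below is a minimal sketch of that planning step only. `plan_clips` is a hypothetical name, stitching the clips is out of scope, and whether characters stay consistent across separate generations is not something this article establishes.

```python
def plan_clips(total_seconds: int, max_clip_seconds: int = 10) -> list[int]:
    """Split a target runtime into clip durations, each within the per-clip cap."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    full_clips, remainder = divmod(total_seconds, max_clip_seconds)
    durations = [max_clip_seconds] * full_clips
    if remainder:
        durations.append(remainder)  # final, shorter clip covers what is left
    return durations


print(plan_clips(25))  # three clips: 10s, 10s, 5s
```

Passing `max_clip_seconds=15` or `12` would apply the same planning to the Seedance 2.0 and Sora 2 caps listed in the comparison table.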

## Seedance 2.0 API: The Multimodal Director

ByteDance's Seedance 2.0 is not just a text-to-video model; it's a compositing engine. Its Quad-Modal Reference System accepts text, images, video clips, and audio simultaneously, letting you mix and match inputs to achieve precise creative outcomes that are impossible with any other model.

### What Makes Seedance 2.0 Unique

- **Native audio + video lip-sync in 8 languages:** This is the feature that changes everything for international content creators. Record a script in English, and Seedance 2.0 delivers a finished video with synchronized lip movements in Hindi, Mandarin, Spanish, Portuguese, French, German, Japanese, and Korean, without any TTS pipeline. A step that previously required three separate API calls now takes one.
- **9 image + 3 video + 3 audio inputs:** Reference a person from one image, a scene from a second, camera motion from a video clip, and background music from an audio file, all in one generation call.
- **Video editing (not just generation):** Modify existing video without regenerating from scratch. Character replacement, scene extension, style transfer, and narrative edits are all available via API.
- **15-second maximum output:** The longest in this comparison, which is critical for 15s Instagram Reels, TikTok clips, and pre-roll ads.
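With up to 9 images, 3 videos, and 3 audio files per call, it is easy to exceed a limit when references are assembled programmatically. The sketch below checks the counts before a request is sent. The limits are the ones described in this article rather than values taken from an official spec, and `check_references` is a hypothetical helper.

```python
# Limits as described for Seedance 2.0 in this article; not verified against an official spec.
REFERENCE_LIMITS = {"images": 9, "videos": 3, "audio": 3}


def check_references(images=(), videos=(), audio=()):
    """Return a list of problems; an empty list means all counts are within limits."""
    supplied = {"images": images, "videos": videos, "audio": audio}
    problems = []
    for kind, items in supplied.items():
        limit = REFERENCE_LIMITS[kind]
        if len(items) > limit:
            problems.append(f"{len(items)} {kind} supplied, limit is {limit}")
    return problems


print(check_references(images=["ref.jpg"] * 10, videos=["motion.mp4"]))
```

Returning a list of problems instead of raising on the first one lets a caller report every violation at once.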

### Seedance 2.0 API Limitations

- API launches February 24, 2026, so it is not yet available for production integration
- 2K max resolution (vs Kling 3.0's 4K), a lower ceiling for broadcast use cases
- Character consistency is strong but slightly behind Kling 3.0 in benchmarks

### Best Use Cases for Seedance 2.0 API

- Multilingual video content (no TTS pipeline needed)
- Music video production requiring precise audio-visual sync
- E-commerce product videos with controlled motion
- Creative agencies managing multi-reference compositing workflows
- Video editing/restyling pipelines (not just generation)
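For the multilingual use case, one way to structure the work is to reuse the same source assets and vary only the target language, producing one request body per language. This is a sketch under assumptions: the field names mirror the Seedance 2.0 request shown in this article's Python examples, the language codes other than `"hi"` are guesses at what the API would accept, and `build_lipsync_payloads` is a hypothetical helper.

```python
def build_lipsync_payloads(prompt, init_image, audio_url, target_languages, duration=12):
    """Build one request body per target language, reusing the same source assets."""
    if not 1 <= duration <= 15:
        raise ValueError("Seedance 2.0 clips are capped at 15 seconds")
    return [
        {
            "prompt": prompt,
            "init_image": init_image,
            "audio_url": audio_url,
            "target_language": lang,  # the only field that changes per request
            "duration": duration,
        }
        for lang in target_languages
    ]


payloads = build_lipsync_payloads(
    prompt="A product spokesperson explaining the new feature",
    init_image="https://example.com/spokesperson.jpg",
    audio_url="https://example.com/script_english.mp3",
    target_languages=["hi", "es", "fr"],  # "es" and "fr" are assumed codes
)
print([p["target_language"] for p in payloads])
```

Building the payloads separately from sending them keeps the batch easy to inspect or queue before any request is made.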

## Sora 2 API: Physics & Realism

OpenAI's Sora 2 is architecturally different from both Kling and Seedance. Where those models optimize for motion quality and control, Sora 2 was trained to understand how the physical world works. Ask it to simulate a glass breaking, water pouring, or cloth blowing in wind, and you get physically accurate behavior that other models can't match.

### What Makes Sora 2 Different

- **Physics accuracy:** Best-in-class for simulation, covering material properties, gravity, fluid dynamics, and lighting interactions. Critical for product visualization, architectural rendering, and scientific visualization.
- **Temporal consistency:** Objects maintain correct position and scale across the entire video duration.
- **Prompt adherence:** Strong instruction-following for complex multi-element scenes.

### Sora 2 API Limitations (Important for Developers)

- ⚠️ **Limited API access:** As of February 2026, Sora 2 API access remains restricted. Rate limits are tight, and waitlists exist for high-volume usage, which makes it unreliable for production-scale applications.
- 1080p maximum (no 4K option)
- No multimodal input (text and basic image-to-video only)
- Higher cost per second than Kling 3.0 at comparable quality tiers
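When rate limits are tight, a common mitigation is to retry throttled requests with exponential backoff and jitter. The sketch below shows that generic pattern and is not Sora-specific. It assumes your own request function raises a `RateLimited` exception when the API signals throttling (for example on an HTTP 429 response, if that is what the provider returns); both `RateLimited` and `call_with_backoff` are hypothetical names.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the caller's request function when the API signals throttling."""


def call_with_backoff(request_fn, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call request_fn, retrying on RateLimited with exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return request_fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller decide what to do
            # Delay doubles each attempt; jitter spreads out simultaneous retries.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
```

The `sleep` parameter is injectable so the retry logic can be exercised in tests without real waiting. Backoff smooths over short bursts of throttling, but it does not raise the underlying quota.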

### Best Use Cases for Sora 2 API

- Product visualization requiring accurate physics (cosmetics, food, materials)
- Architectural and interior design walkthroughs
- Scientific or educational simulations
- High-realism brand videos where physics accuracy justifies the premium

## Python API Integration Examples

All three models are accessible through the ModelsLab unified API: one API key, one endpoint format, and a consistent response schema. Here's how to integrate each in Python:

### Kling 3.0: Text-to-Video

```python
import requests

API_KEY = "your_modelslab_api_key"

response = requests.post(
    "https://modelslab.com/api/v6/video/kling_v3",
    headers={"Authorization": f"Bearer {API_KEY}"},
    json={
        "prompt": "A professional woman presenting at a tech conference, photorealistic, 4K",
        "negative_prompt": "blurry, low quality, distorted",
        "duration": 8,       # seconds (max 10)
        "resolution": "4K",  # "1080p" or "4K"
        "fps": 60,
        "character_consistency": True,
    },
)
response.raise_for_status()  # surface HTTP errors before parsing the body
data = response.json()
print(f"Video URL: {data['output'][0]}")
```

### Seedance 2.0: Multilingual Video (Available Feb 24)

```python
import requests

API_KEY = "your_modelslab_api_key"

response = requests.post(
    "https://modelslab.com/api/v6/video/seedance_v2",
    headers={"Authorization": f"Bearer {API_KEY}"},
    json={
        "prompt": "A product spokesperson explaining the new feature, confident tone",
        "init_image": "https://example.com/spokesperson.jpg",   # reference image
        "audio_url": "https://example.com/script_english.mp3",  # source audio
        "target_language": "hi",  # Hindi lip-sync, no TTS needed
        "duration": 12,
        "resolution": "2K",
    },
)
response.raise_for_status()  # surface HTTP errors before parsing the body
data = response.json()
print(f"Multilingual video: {data['output'][0]}")
```

### Unified Access: Auto-Select Best Model by Use Case

```python
import requests

API_KEY = "your_modelslab_api_key"


def generate_video(use_case: str, prompt: str, **kwargs) -> str:
    """Route to the optimal video model based on use case."""
    model_map = {
        "character": "kling_v3",        # Best character consistency
        "multilingual": "seedance_v2",  # Native lip-sync in 8 languages
        "physics": "sora_2",            # Best physics simulation
        "music_video": "seedance_v2",   # Multimodal audio control
        "4k_ad": "kling_v3",            # 4K/60fps for premium ads
    }
    model = model_map.get(use_case, "kling_v3")  # unknown use cases fall back to Kling 3.0
    response = requests.post(
        f"https://modelslab.com/api/v6/video/{model}",
        headers={"Authorization": f"Bearer {API_KEY}"},
        json={"prompt": prompt, **kwargs},
    )
    response.raise_for_status()  # surface HTTP errors before parsing the body
    return response.json()["output"][0]


# Usage
video_url = generate_video(
    "multilingual",
    "Product demo in Hindi",
    init_image="product.jpg",
    target_language="hi",
)
```

All endpoints share the same authentication, error handling, and webhook patterns, so switching models is a one-line change. See the full API documentation →
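The examples above index `data['output'][0]` directly, which raises a bare `KeyError` or `IndexError` if a response does not contain a finished video. What the API returns in error or still-processing cases is not specified here, so the sketch below assumes only the `output` list shown in the examples and fails with a clearer message when it is missing. `extract_video_url` is a hypothetical helper.

```python
def extract_video_url(data: dict) -> str:
    """Return the first output URL, or raise ValueError with context if none is present."""
    output = data.get("output")
    if not isinstance(output, list) or not output:
        raise ValueError(f"no video URL in response: {data!r}")
    first = output[0]
    if not isinstance(first, str) or not first.startswith(("http://", "https://")):
        raise ValueError(f"unexpected output entry: {first!r}")
    return first


print(extract_video_url({"output": ["https://example.com/video.mp4"]}))
```

Including the raw response in the error message makes it easier to see what the API actually sent when a generation does not complete as expected.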

## Decision Framework: Which AI Video API to Choose?

The "best" model depends entirely on your use case. Here's a practical framework:

### Choose Kling 3.0 API if:

- You need 4K/60fps output for premium ad production
- Character consistency is your #1 priority (avatars, spokespersons)
- You're building avatar/spokesperson video features competing with HeyGen
- You need production-ready API access right now (it's live)

### Choose Seedance 2.0 API if:

- You're building multilingual video workflows (8 languages, no TTS step)
- You need to accept multiple reference inputs per generation
- Music video, creative agency, or e-commerce ad generation is your focus
- Video editing (not just generation) is part of your pipeline
- You can wait until February 24th for API access

### Choose Sora 2 API if:

- Physics accuracy is mission-critical (product visualization, scientific content)
- You're on the waitlist and have confirmed API access at your required volume
- Your use case is niche enough that tight rate limits aren't a production blocker

### Use All Three (Recommended for platforms):

If you're building a video generation platform or creative tool, the answer is often "all of the above." Route requests to the optimal model by use case: Kling for character ads, Seedance for multilingual content, Sora 2 for physics-accurate brand videos. ModelsLab's unified API makes this routing trivial; see the code example above.
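Since the three APIs are not equally available yet, a router for a platform may want a fallback order instead of a single model per use case. The sketch below is one way to express that. The launch dates and model identifiers are the ones used in this article, while the preference orderings, the `has_sora_access` flag, and `pick_model` itself are illustrative assumptions.

```python
from datetime import date

# Launch dates as stated in this article. Sora 2 is left out of the calendar
# because its access is gated by a waitlist rather than a date.
API_LIVE_FROM = {
    "kling_v3": date(2026, 2, 5),
    "seedance_v2": date(2026, 2, 24),
}

# Preferred model first, then fallbacks; the ordering is an illustrative choice.
PREFERENCES = {
    "multilingual": ["seedance_v2", "kling_v3"],
    "character": ["kling_v3", "seedance_v2"],
    "physics": ["sora_2", "kling_v3"],
}


def pick_model(use_case, today, has_sora_access=False):
    """Return the first preferred model that is usable on the given date."""
    for model in PREFERENCES.get(use_case, ["kling_v3"]):
        if model == "sora_2":
            if has_sora_access:
                return model
            continue  # no confirmed access; try the next preference
        if today >= API_LIVE_FROM[model]:
            return model
    raise LookupError(f"no model available for {use_case!r} on {today}")


print(pick_model("multilingual", date(2026, 2, 10)))  # before the Seedance launch
print(pick_model("multilingual", date(2026, 2, 24)))  # on launch day
```

A fallback model may not offer the feature that made the first choice attractive (Kling 3.0's lip-sync needs post-processing, for example), so a production router would likely also report when it has downgraded.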

## Access Kling 3.0, Seedance 2.0, and Sora 2 via One API

Managing API keys, rate limits, and response formats across three different providers is unnecessary overhead. ModelsLab provides unified access to all three models (plus 600+ additional AI models for image generation, audio, and LLMs) through a single API key and consistent endpoint structure.

- ✅ **Kling 3.0:** Live now. Start generating 4K character videos today
- ✅ **Seedance 2.0:** Available February 24th. Pre-register for access
- ✅ **Sora 2:** Access via ModelsLab waitlist
- ✅ **600+ models:** Stable Diffusion, SDXL, FLUX, Kling, Sora, Seedance, and more in one place

Try it free: Get your API key at ModelsLab →

Or generate videos instantly in the browser at mstudio.ai — the unified creative studio powered by every top AI video model.

## FAQ

### Is Seedance 2.0 API available now?

Not yet. ByteDance is launching the Seedance 2.0 API on February 24th, 2026. You can access it through ModelsLab starting on launch day.

### Can Kling 3.0 generate 4K video via API?

Yes. Kling 3.0 is the only AI video API currently offering 4K/60fps output. It's available now through the Kling v3 endpoint on ModelsLab.

### Does Seedance 2.0 support multilingual lip-sync natively?

Yes, this is Seedance 2.0's breakthrough feature. It handles audio-video lip-sync in 8 languages (English, Hindi, Mandarin, Spanish, Portuguese, French, German, Japanese) without requiring a separate TTS pipeline.

### What's the difference between Kling 3.0 and Seedance 2.0?

Kling 3.0 excels at character consistency, 4K output, and motion quality. Seedance 2.0 excels at multimodal control, multilingual lip-sync, and video editing workflows. Kling 3.0's API is live now, while Seedance 2.0's API launches on February 24th, 2026; choose based on your primary use case.

### Why is Sora 2 API limited compared to Kling and Seedance?

As of early 2026, OpenAI has not opened Sora 2 to unrestricted API access. Rate limits are tight and waitlists exist for high-volume usage, which makes it less practical for production applications that need consistent throughput. Kling 3.0 and Seedance 2.0 offer more accessible API programs for developers.
